The model in example #5 is used to run a SageMaker Asynchronous Inference endpoint. The endpoint is configured to run on 1 ml.c5.xlarge instance and to scale the instance count down to zero when not actively processing requests. The ml.c5.xlarge instance in the endpoint has 4 GB of general-purpose (SSD) storage attached to it. In this example, the endpoint maintains an instance count of 1 for 2 hours per day and has a cooldown period of 30 minutes, after which it scales down to an instance count of zero for the rest of the day. Therefore, you are charged for 2.5 hours of usage per day. The endpoint processes 1,024 requests per day. The size of each invocation request/response body is 10 KB, and each inference request payload in Amazon S3 is 100 MB. Inference outputs are 1/10 the size of the input data and are stored back in Amazon S3 in the same Region. In this example, the data processing charges apply to the request and response body, but not to the data transferred to/from Amazon S3.

Let's assume you are running a chat-bot service for a payroll processing company. You expect a spike in customer inquiries at the end of March, before the tax filing deadline. However, for the rest of the month, the traffic is expected to be low.

When not actively processing requests, you can configure auto-scaling to scale the instance count to zero to save on costs. For input payloads in Amazon S3, there is no cost for reading input data from Amazon S3 and writing the output data to S3 in the same Region.
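The usage figures in the asynchronous inference example can be reproduced with a few lines of arithmetic. The following is only a rough sketch: the class and function names are hypothetical, the hourly and per-GB rates are left as caller-supplied parameters because the text quotes no prices, and it assumes that the 10 KB figure applies to the request body and to the response body separately. Storage charges are left out because the text does not say how the 4 GB volume is billed.

```python
from dataclasses import dataclass

KB_PER_GB = 1024 * 1024
MB_PER_GB = 1024


@dataclass
class AsyncEndpointUsage:
    """Daily usage figures taken from the asynchronous inference example."""
    instance_count: int = 1
    active_hours_per_day: float = 2.0
    cooldown_hours_per_day: float = 0.5   # 30-minute cooldown before scaling to zero
    requests_per_day: int = 1024
    body_kb: float = 10.0                 # assumed to apply to request AND response body
    s3_input_mb: float = 100.0
    s3_output_ratio: float = 0.1          # outputs are 1/10 the size of the inputs

    def billed_hours_per_day(self) -> float:
        # Instances are billed while active and during the cooldown period.
        return self.instance_count * (
            self.active_hours_per_day + self.cooldown_hours_per_day
        )

    def body_gb_per_day(self) -> float:
        # Request plus response bodies: the only data subject to processing charges.
        return 2 * self.requests_per_day * self.body_kb / KB_PER_GB

    def s3_gb_per_day(self) -> tuple[float, float]:
        # Data read from and written to Amazon S3 (same Region, not charged here).
        read_gb = self.requests_per_day * self.s3_input_mb / MB_PER_GB
        return read_gb, read_gb * self.s3_output_ratio


def monthly_cost(usage: AsyncEndpointUsage, days: int,
                 instance_rate_per_hour: float, data_rate_per_gb: float) -> float:
    """Compute plus data-processing cost; both rates are hypothetical inputs."""
    compute = usage.billed_hours_per_day() * days * instance_rate_per_hour
    data = usage.body_gb_per_day() * days * data_rate_per_gb
    return compute + data


if __name__ == "__main__":
    usage = AsyncEndpointUsage()
    print("Billed instance hours per day:", usage.billed_hours_per_day())
    print("Request/response body GB per day:", usage.body_gb_per_day())
    print("S3 GB read/written per day (not charged):", usage.s3_gb_per_day())
```

To get a monthly estimate, pass the number of days in the month and the current rates for your Region to `monthly_cost`; the S3 volumes are reported only for reference, since the example excludes them from data processing charges.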

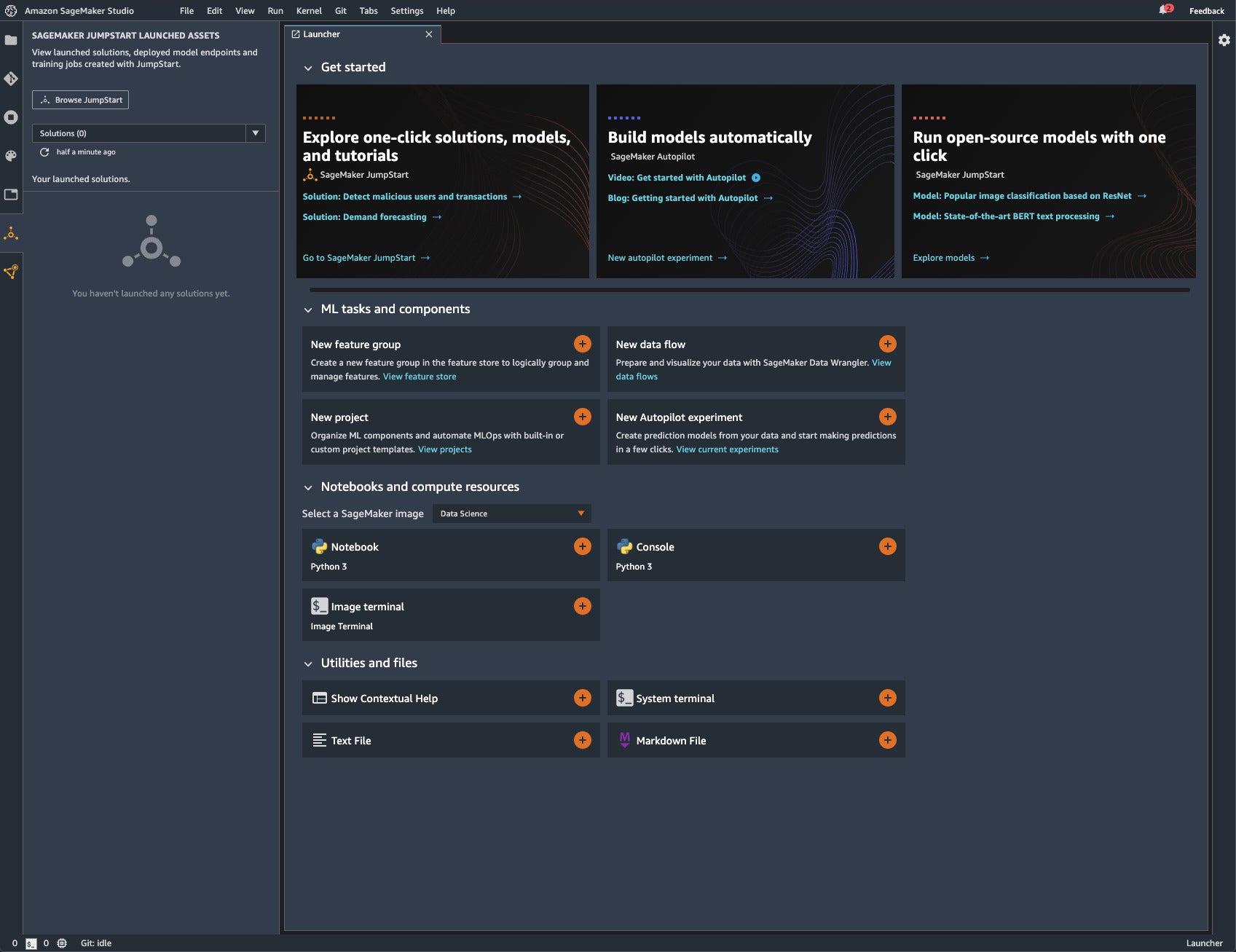

Amazon SageMaker Asynchronous Inference charges you for instances used by your endpoint. You pay only for the underlying compute and storage resources within SageMaker or other AWS services, based on your usage.
